Dear Investors,

A letter from one engineer, not a team. I have been building with AI since 2023; Critique is the focused product, built in earnest since March 2026: roughly three hundred commits so far on the way to general release readiness. Section 06 holds the usage, throughput, and latency signals that matter this early. What remains is the path to general release with the reliability, policy depth, and polish production teams expect. I am carrying the inference bill and looking for the right co-founders and backers to build with.

AI Change Control Platform

300+ commits to today

v4 shipped, GA next

Building with AI since 2023

I will keep this plain. No jargon layer, no dramatic mission statement. The people I do my best work with are operators, engineers, and investors who want to know what is on the screen: what ships, what breaks, what comes next.

Skim the section labels (the eyebrows), jump to what matters to you, skip the rest. If you want to talk, my email is at the top and again at the bottom.

Interactive read

Jump into the assistant — same letter, your questions

Frontier models (the flagship reasoning tiers from leading labs) are trained for long chains of thought, tool use, and careful synthesis under load, not just next-token autocomplete. That is why the lead verdict and high-stakes specialist passes default to them, while narrower subtasks can route to smaller or cheaper checkpoints. Reviews are not a single prompt: they are scout work, parallel specialists, a lead verdict, optional sandbox analysis, and sometimes Remedy loops. Each stage can pick a different model. These are four of the families teams actually select in production today.

- GPT-5.5

OpenAI · GPT-5.5 and GPT-5.5 Pro on the review router

- Claude Opus 4.7

Anthropic · ultra-tier lead and synthesis

- DeepSeek V4

DeepSeek · V4 Flash and V4 Pro (1M-class context)

- GLM-5.1

Z.AI · GLM-5.1 and GLM-5V-Turbo in catalog

Documentation and ship logs

We publish architecture notes beside the product. If you want the same mental model our engineers use for webhooks, queues, models, memory, Remedy, and credits, start with the docs, then read the founder posts for narrative and release cadence.

- Ship log

Public release notes covering metering, review automation, and credits: what shipped and where to verify in Git.

- Event-driven pipeline

GitHub webhooks, QStash, process-delivery, and how work leaves the HTTP thread.

- Model execution engine

Runtime roles, OpenRouter fallbacks, pricing cache, and how review routes models.

- Memory and retrieval

Repository indexing, hybrid lexical + vector recall, and promotion into long-term memory.

- PR review lifecycle

Review runs, sandbox analysis hop, checks, and what lands on the pull request.

- Remedy blueprint

How bounded fix loops, sandboxes, and handoff from findings to patches work.

- Policy fields

Installation and repository policy knobs teams use to tune automation.

- Billing and credits

Credits, usage events, and how review consumption maps to entitlements.

- Bot mentions and commands

How @Critique behaves on PR threads and what operators can trigger from comments.

- PR Review v5

Diff-first runs and multi-turn OpenCode: current generation of the review engine.

- PR Review v4.1

Execution depth, live observability, and operator-facing controls.

- PR Review v4 beta

When the beta hardened into something teams could trust for daily merges.

- Critique Builder

Where natural language compiles into repository-scoped work in sandboxes.

- Remedy in Critique Chat

Closing the loop from chat to patch without leaving the product.

- Cloud agents and Remedy

How remote coding agents changed, and where Remedy sits in the stack.

- GPT-5.5 and GPT-5.5 Pro

Benchmarks, credit economics, and when to spend on OpenAI’s latest stack.

- DeepSeek V4

Flash and Pro on the router, EU inference options, and open-weights positioning.

Default docs entry: Memory system overview. Marketing site: critique.sh, Builder: /workspace?mode=build, Remedy: /remedy, Ship log: /version.

AI made writing code trivially fast. Nobody rebuilt the review layer underneath it.

Every engineering team I have talked to is feeling the same weight. Pull requests arrive in minutes instead of days. Diffs are three to five times larger than they were eighteen months ago. The most senior reviewers (the scarcest resource on any team) are burning daylight just reading the queue, let alone pushing back on it.

The workflow even has a name now. Andrej Karpathy coined "vibe coding" in early 2025: build by prompting, iterate until it runs, and often treat the internals as fungible compared to behaviour. Millions of developers work that way. It is exhilarating for prototyping. At repository scale it means more merges, thicker diffs, and more people confidently shipping AI-authored code they did not type line-by-line.

The tooling wave matches the incentives: new agents, faster edit modes, a race of vendors to help anyone ship, so more shippers hit the same branch protections every week. The surface area for "looks fine" merges expanded fast. Repos do not lack volume; they fill with plausible churn: duplicate implementations, drifting contracts, dependencies nobody audited, behaviours that satisfy CI and regress in prod. If you want a single word for that, call it slop. The symptom is review debt, not laziness.

The industry narrative around AI coding has fixated almost entirely on one half of the loop: generation. Write the function. Autocomplete the test. Explain the unfamiliar file. The other half, where a human has to decide under time pressure whether the machine's output should actually ship, gets a fraction of the attention and a rounding error of the investment.

Traditional CI was never meant to answer the harder question. CI tells you the build passes. It cannot tell you the architecture just drifted. It cannot tell you a new dependency pulled in a license you do not ship under. It cannot tell you the agent re-implemented a helper that already existed three folders over, with a subtly different contract.

The gap between what CI verifies and what a reviewer should catch used to be narrow enough for a human to close with coffee and discipline. With AI in the loop that gap has opened into a chasm, and it widens every quarter that model capability improves.

Review is an architecture, not a chatbot.

Most AI review tools have the same shape underneath the marketing: pipe the diff into one model, ask for comments, post them on the PR. The model has no memory of the repository, no map of what this change could break, and no honest way to say "I am not sure" instead of inventing a finding.

I modelled review the way a disciplined engineering team actually runs it: not one reviewer doing everything at once, but a small team with distinct roles, a shared evidence trail, and a final signatory who reads the complete case before deciding.

Every PR opens a shared investigation. Scout maps the blast radius first (callers, contracts, tests, adjacent modules) so the downstream agents understand what the diff touches, not just the characters inside it. Specialists then claim lanes in parallel: security audits secrets, auth boundaries, input surfaces; tests verifies coverage against actual risk rather than line count; architecture watches for contract drift and coupling; performance scans for algorithmic regressions on hot paths. Each specialist emits structured findings with severity, confidence, and line-level evidence, not prose.

Frontier-class models belong on the synthesizer. This year's flagship systems from the major labs trade higher token cost for multi-step reasoning, tool discipline, and the ability to hold contradictions across a long evidence pack. That is exactly what cheap completion models scramble when asked to pretend they reviewed a whole repo context. Specialists can run smaller or sharper models tuned to lanes; the lead must adjudicate conflicting signals without flattening nuance.

The lead reasoning model reads the complete evidence pack and writes a verdict: PASS, REQUEST CHANGES, or BLOCK, with the reasoning exposed on the record. That verdict can drive a required GitHub check. What the team experiences is a review squad, not a text generator, and critically a review squad that shows its work.
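To make the shape of that evidence concrete, here is a minimal sketch of a structured finding and a verdict step. It is an illustration only: the field names, the `Severity` scale, the confidence threshold, and the rule-based `toy_verdict` function are all assumptions. The letter describes the lead as a reasoning model that reads the full evidence pack, so this deterministic stand-in shows the data flow, not the actual adjudication.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical scale; the letter only says findings carry a severity.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    lane: str          # e.g. "security", "tests", "architecture", "performance"
    severity: Severity
    confidence: float  # 0.0 to 1.0, as reported by the specialist
    path: str          # file the evidence points at
    line: int          # line-level evidence
    summary: str


def toy_verdict(findings: list[Finding], min_confidence: float = 0.6) -> str:
    """Rule-based stand-in for the lead verdict (thresholds are assumptions)."""
    # Ignore findings the specialist itself was unsure about.
    trusted = [f for f in findings if f.confidence >= min_confidence]
    if any(f.severity >= Severity.CRITICAL for f in trusted):
        return "BLOCK"
    if any(f.severity >= Severity.MEDIUM for f in trusted):
        return "REQUEST CHANGES"
    return "PASS"


findings = [
    Finding("security", Severity.CRITICAL, 0.9, "api/auth.py", 42, "token logged in plaintext"),
    Finding("tests", Severity.MEDIUM, 0.4, "api/auth.py", 10, "branch not covered"),
]
print(toy_verdict(findings))  # BLOCK
```

The point of the sketch is that a low-confidence finding is carried in the record but does not drive the outcome on its own, which is one way to give a reviewer an honest "I am not sure".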

“I am not selling a faster comment bot. I am selling the difference between code that compiled and code that should merge.”

As AI writes more of the world's software, review becomes the only human-scale safeguard left.

GitHub reported more than 100 million developers on the platform in 2024. Every one of them is, at this point, within one click of a model that can draft working code. The bottleneck has already shifted, and is still shifting, from generation to judgment.

The tools that will matter most in the next cycle are the ones that help teams understand, audit, and trust what machines are writing on their behalf. That is not a better editor. It is not a better autocomplete. It is a layer that sits at the boundary between an engineer's laptop and a production system, and decides with evidence whether the line should cross.

Critique is not competing with Copilot or Cursor for the cursor position inside the IDE. It is competing for the gate at the edge of the repository, where code becomes a company's liability. Those two categories can coexist, and they should, but they are not the same business, and they will not have the same winner.

Listed on GitHub. Under a minute to install; a few minutes more to tune policy if you want.

Critique GitHub App

Same install surface as Copilot integrations and enterprise GitHub extensions: authorize the app, pick scopes, wire repositories once, then merges start picking up Critique checks.

- Under ~1 minute

to authorize the Marketplace install (no YAML, no runner churn).

- A few minutes optional

if you tweak specialists or strictness in the dashboard afterward.

- Defaults only

if you want Critique routing and reviewers set for you.

Authorize through GitHub Marketplace once (panel above), and merges immediately surface Critique checks without refactoring CI manifests. Dip back into dashboard policy minutes later if granular control genuinely matters; otherwise lean on presets.

In v4 every pull request opens a Change Passport: the PR-level system of record that carries provenance (human, bot, or managed agent), a persisted risk score, gate events, evidence runs, the merge-policy decision, verified-repair proof, and findings memory. Passports are the primary operator queue; evidence runs are the commit-level drill-down beneath them. The Control Board configures the Agent Firewall, merge policy, delivery, memory, and incident learnings from one surface.

Merge policy is policy-as-code. It evaluates in dry-run, warn, or enforce mode, lives in the dashboard or a repo file, and publishes its own GitHub check. An operator override records who allowed the merge and why, then patches the check-run status so branch protection reflects the call. The dashboard stays forensic: verdicts replay specialist attribution, the evidence bundles the lead used, the prompts, and the exact model snapshots, so audits can revisit any verdict.
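The three modes and the override can be sketched as a small mapping from verdict to check outcome. This is a hedged illustration, not Critique's implementation: the function name, the `Override` type, and the specific mapping are assumptions. The only external fact used is that `success`, `failure`, and `neutral` are valid GitHub check-run conclusions.

```python
from dataclasses import dataclass
from typing import Optional

MODES = ("dry-run", "warn", "enforce")


@dataclass(frozen=True)
class Override:
    actor: str   # who allowed the merge
    reason: str  # why they allowed it


def check_conclusion(verdict: str, mode: str, override: Optional[Override] = None) -> str:
    """Map a verdict to a GitHub check-run conclusion (hypothetical mapping)."""
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode!r}")
    if mode == "dry-run":
        return "neutral"   # evaluated and recorded, never gates a merge
    if verdict == "PASS":
        return "success"
    if mode == "warn":
        return "neutral"   # surfaced on the PR without failing the check
    # enforce: a non-PASS verdict fails unless an operator override is on record
    return "success" if override is not None else "failure"


print(check_conclusion("BLOCK", "warn"))     # neutral
print(check_conclusion("BLOCK", "enforce"))  # failure
print(check_conclusion("BLOCK", "enforce", Override("alice", "hotfix, reviewed offline")))  # success
```

Keeping the override as an explicit value rather than a boolean means the audit record always carries the actor and the reason alongside the changed check status.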

Most workspaces start by routing review traffic through OpenRouter for breadth and predictable fallbacks. When procurement demands it, bring-your-own-agent (BYOA) configuration points the same specialist graph at whichever inference substrate you certify (hosted OpenAI-compatible endpoints, Azure OpenAI, Amazon Bedrock, private GPU fleets). GitHub still receives identical verdict artefacts; only the token bill and residency posture move between vendors.

Remedy closes the remediation loop entirely inside Critique when you choose our sandboxes: it applies bounded patches inside ephemeral environments, reruns lint and tests from the blueprint, pushes back to your branch, then stops because loop ceilings hard-cap autonomous retries.
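A loop ceiling of this kind can be sketched in a few lines. This is a minimal illustration under stated assumptions: `apply_patch` and `run_checks` are hypothetical callables standing in for sandbox patching and the lint and test rerun, and the default ceiling of three attempts is invented for the example.

```python
from typing import Callable


def bounded_fix_loop(
    apply_patch: Callable[[int], None],
    run_checks: Callable[[], bool],
    max_attempts: int = 3,
) -> tuple[bool, int]:
    """Retry patch-then-check until checks pass or the ceiling is reached.

    Returns (succeeded, attempts_used). The loop never exceeds max_attempts.
    """
    for attempt in range(1, max_attempts + 1):
        apply_patch(attempt)   # hypothetical: apply a bounded patch in the sandbox
        if run_checks():       # hypothetical: rerun lint and tests
            return True, attempt
    return False, max_attempts


# Simulated run: checks fail once, then pass on the second attempt.
attempts_seen: list[int] = []
results = iter([False, True])

outcome = bounded_fix_loop(
    apply_patch=attempts_seen.append,
    run_checks=lambda: next(results),
    max_attempts=3,
)
print(outcome)  # (True, 2)
```

When the ceiling is hit without a passing run, the function returns a failure instead of retrying, which is the behaviour the hard cap is meant to guarantee.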

Remedy also emits hand-off prompts distilled from scout plus specialist transcripts. Paste that packet into whichever coding agent owns the workstation (Cursor, Claude Code, Copilot, Windsurf, OpenCode, Claude desktop …) and you inherit the same structured context without surrendering execution to Critique. That is the unlock customers expect in a multi-agent stack: review output travels as a high-quality prompt, not a proprietary chat thread.

Critique Chat keeps repository-grounded answers between PRs without burning review credits. Builder turns natural language into repository-scoped OpenCode sandboxes with the same router discipline as review.

The wedge is the engineer who just merged something they were not sure about.

Adoption does not start with a procurement cycle. It starts the evening a senior engineer approves a PR they only half-read, because the queue was too long and the diff was too large, and then spends the next day fixing the bug that slipped through review.

That engineer installs Critique on one repository, on a personal account, over lunch. The first few PRs produce verdicts they can argue with, correct, and disagree with in public comments. Within a week the team has a shared opinion on where it is strict, where it is too strict, and where it is catching things human review was missing. From there it moves into the organisation's main repositories and into branch protection, not because someone signed a contract, but because removing it now would feel like turning off a seatbelt.

Critique wins on the path engineering teams already travel (bottom-up, evidence-first, friction-free) before it ever lands on the security team's radar. By the time the procurement conversation happens, the tool is already embedded in the workflow it is about to be purchased for.

What the live workload looks like as of the week this was written.

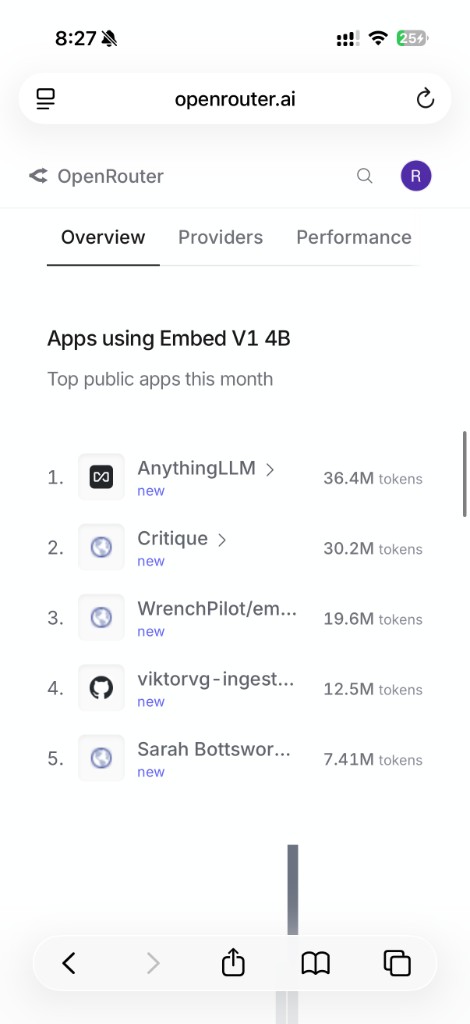

I am early. This section avoids vanity user counts and stays with the signals I actually trust at this stage: workload, throughput, and latency. The figures below match internal dashboards as of the day this letter shipped.

The right early question is not "how many seats?" It is whether real repositories are pushing meaningful review volume through the system, whether the token bill is large enough to prove live usage, and whether verdicts still come back fast enough to matter in a shipping workflow.

Credits, not seats. Revenue should scale with the code, not the headcount.

Critique sells monthly credit pools instead of per-seat licenses or flat PR caps. One credit is a normalised review slice, anchored roughly to 1M input and 150k output tokens at lead-model quality. A typical PR costs between 3 and 50 credits depending on size and the model stack a team has configured for that repository.
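As a rough worked example of the credit anchor, the sketch below estimates credits from token counts. The two anchor values come from the paragraph above; the charging rule itself (bill whichever side, input or output, consumes more of its allowance, rounded up) is purely an assumption for illustration, and it ignores the model-stack weighting the letter mentions.

```python
INPUT_TOKENS_PER_CREDIT = 1_000_000   # from the letter: roughly 1M input tokens
OUTPUT_TOKENS_PER_CREDIT = 150_000    # from the letter: roughly 150k output tokens


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding float rounding."""
    return -(-a // b)


def estimate_credits(input_tokens: int, output_tokens: int) -> int:
    """Hypothetical rule: charge for whichever side uses more of its allowance."""
    return max(
        ceil_div(input_tokens, INPUT_TOKENS_PER_CREDIT),
        ceil_div(output_tokens, OUTPUT_TOKENS_PER_CREDIT),
    )


print(estimate_credits(12_000_000, 900_000))  # 12
print(estimate_credits(2_500_000, 600_000))   # 4
```

Under this assumed rule, a review that fans out to 12M input tokens and 900k output tokens lands at 12 credits, inside the 3 to 50 range quoted for a typical PR.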

Teams are shipping far more code on the same flat-price subscription to an AI editor or model provider, and that shift is exactly why usage-based review wins: more merged lines means more to inspect, and that work consumes more credits. Our revenue should rise with the volume and complexity of the code moving through the gate, not with headcount in a one-price-per-seat model that stays flat while output explodes.

This aligns revenue directly with the thing that is actually growing (AI-generated code volume) and leaves the seat count alone. Teams that review more pay more. Teams that route cheaper models for standard PRs and reserve frontier models for security-critical paths pay less per review. Critique captures the economics of model choice rather than fighting against them, which is the same structural wind that has been at Vercel's back on compute.

Standard, Pro, and Ultra tiers scale from 500 to 10,000 credits per month. Enterprise buyers get custom volume, private hosting, SLAs, and a real contract. Students and active OSS maintainers get a curated low-cost lane with unlimited repository indexing. The goal there is long-term surface area, not short-term revenue.

The beta is expensive because the product is honest.

- Model & router APIs (~72%)

OpenRouter (or BYOA) frontier plus specialist tiers: scout, embeddings, and lead synthesis dominate variable burn.

- Sandboxes (~12%)

Ephemeral remediation and analysis workspaces (bounded Remedy loops, sandbox review hops). Spikes on batch days.

- GitHub + platform APIs (~8%)

Private GitHub Apps traffic, ancillary provider calls, token cache misses on hot repos.

- Application hosting (~8%)

App runtime, Postgres, Redis, queues (QStash), workers, object storage, observability. Fixed-ish floor under traffic.

Rounded internal split meant to orient investors, not a GAAP attribution. Weeks with heavier Remedy or Builder batches swing the sandbox slice up; onboarding surges amplify GitHub App calls; routing teams onto cheaper checkpoints narrows API share without collapsing quality.

The chart directly above lays out illustrative spend buckets inside a frontier-default beta window: frontier router APIs dwarf almost everything else, sandbox minutes and GitHub-heavy weeks spike next, and always-on hosting for the product surface anchors the comparatively predictable residual floor.

Critique is not a slideware demo. Every design partner PR fans out into scout retrieval, parallel specialists, a lead synthesis pass, optional sandbox analysis, embeddings for memory, and sometimes Remedy or Builder sandboxes on top. Each hop hits OpenRouter (or BYOA routes you configure) with real tokens, real cache behavior, and real tail latency.

The audit trail stays reproducible: model IDs on verdicts, confidence on findings, prompts replayed verbatim in dashboards. I fund inference personally until cohort retention holds. This round replaces that personal spend and funds four things: unchanged frontier defaults, hardened BYOA for tighter residency requirements, backend payroll focused on queue latency, and a regression harness spanning Remedy and exported prompts so clipboard hand-offs match in-product fidelity.

If you invest in developer infrastructure, think of this line item the way you think about Snowflake or Datadog: usage correlates with value. I would rather align revenue with meaningful review volume than pretend a cheap summariser can stand in for a governance layer.

“I am asking for capital to fund judgment at scale, not to buy billboards.”

The window between AI velocity and AI governance is narrow, and it is closing.

The companies adopting AI coding tools in 2025 are hitting a problem their compliance, security, and engineering leadership did not have twelve months ago: they can no longer audit the throughput. The board asks what is shipping. Nobody has a clean answer. CTOs are starting to ask pointed, specific questions about provenance, responsibility, and review coverage, and realising they lack the instrumentation to answer them.

Open-source models are simultaneously closing the capability gap with frontier labs at dramatically lower cost per token. That creates exactly the conditions where an intelligent routing layer (one that sends the right model to the right task at the right cost) stops being a curiosity and becomes structurally valuable. I wired routing into the core of the review pipeline in the first week of the codebase, not as a retrofit once margins came under pressure.

I believe the next eighteen to twenty-four months will decide which tools become review infrastructure, and which become features inside someone else's platform. The teams making that decision are evaluating right now. I intend Critique to be the answer they reach for, and I am writing every commit with that outcome in mind.

Not another autocomplete. The AI change control platform the agent era forgot to ship with.

Short term (the next two quarters) is the GitHub App and the Change Passport as the system of record. I want it installed the same week a team adopts any AI coding tool, because the pain from that adoption lands precisely in the reviewer's inbox within days.

Medium term, through next year, is configurable orchestration at the policy level. Teams define the rules: which specialists run on which paths, what model quality tier, what strictness threshold, what merge policy blocks a merge versus what adds a comment. The platform enforces those rules across every PR without a human in the loop for the routine cases, so the humans stay in the loop for the interesting ones. Production incidents feed learnings back into those rules.

Long term is the change control plane for AI-authored software. The system that tells a CTO, a compliance lead, or a board that every change merged carries a passport: provenance, a risk score, evidence behind every block, a policy decision, a verified repair where one was needed, and a signature on every verdict.

What I am raising, and what I want out of the round.

Focused seed runway: eighteen months plus headcount for backend-first and ML hires before outbound sales hires. Evidence sits in live App telemetry (sections 06 and 07a), not slideware drafts.

Co-founder search runs parallel with the capital process. Profiles that fit: hardened backend ownership of async queues plus shipping taste in dev tooling ecosystems. Send repos, timelines, salary expectations.

Go-to-market is the biggest gap angels can unblock before a full hire: GitHub Marketplace discoverability for security buyers, repeatable enterprise questionnaire answers, disciplined launch scaffolding without rewriting the engineer voice. Interested operators should reply with speciality (PMM/comms/category), hourly or project appetite, plus one analogous portfolio anchor.

Use of funds is deliberately boring in the right ways: inference and reliability first (keeping OpenRouter routes warm, shaving seconds off QStash chains, hardening the review-analysis fork), then product depth (Builder GA, Remedy reliability, enterprise policy, BYOA compliance reviews), then the GTM hire once repeatable onboarding is undeniable. A dedicated slice goes toward guardrails for agent hand-off exports so customers can trust the same prompts across skills.sh style bundles and Critique verdicts.

I am optimising this round for cap-table fit over headline valuation. I prefer partners who are already fluent in developer tools, have seen exits past platform incumbents, and are willing to sponsor recruiting and enterprise introductions for eighteen months, rather than trading price for vague logo value.

Investors, potential co-founders, and engineers who have opinions on developer tooling: write directly. A short note beats a perfect one.

Book fifteen minutes

No deck, no warm-up. I can walk you through the actual GitHub App, the dashboard, and one real review on a public repo inside fifteen minutes.

If infra-level governance sits in your fund thesis, reply with capital appetite and diligence outline.

I am talking to investors who take AI governance seriously, and to engineers who want to co-found. If you have built or invested in developer infrastructure before, I would like to hear from you.

Critique.sh is in active beta on the way to general release. There is a real GitHub App, a real review pipeline, and real teams using it in production. This page is a letter, not a deck and not theatre.

Written in the open, shipped the same day it was drafted.

Ask this letter anything — with the model you want

Answers combine the letter, a curated founder + LeemerChat dossier, and pointers into critique.sh essays — tuned to help you believe the thesis without inventing numbers. Not financial advice. Chats are not saved to your Critique account.
