Pricing Critique around real review cost

Solo, Pro, Team, and the new $8 bring-your-own-OpenRouter-key harness exist for one reason: review quality should scale without hiding the model bill.

Pricing reset.

Bundled credits when you want one invoice. BYOK when you want direct OpenRouter billing.

critique.sh

$8 for the harness.

Models at your cost.

Bring your own OpenRouter key when you want direct token billing and still want Critique to run the review system around it.

Sandboxes, OpenCode orchestration, chat streams, GitHub checks, retrieval, encrypted key storage, and usage ledgers.

Lead model, specialist sub-agents, fallbacks, transcription, and chat tokens are charged by OpenRouter directly.

Checkpoint can stop weak or risky PRs before the expensive review swarm starts, preserving credits or BYOK budget.

Use bundled credits when you want one predictable Critique invoice instead of direct provider billing.

This update exists because pricing was becoming the product bottleneck. Critique reviews are not small single-prompt comments anymore. A serious review can run Checkpoint first, build repository context, route a lead model, fan out specialist sub-agents, run sandbox-native OpenCode, capture a live OpenRouter usage ledger, and then synthesize a final verdict into GitHub. That is the right direction for quality. It is also the wrong workload for vague low-price flat plans.

The old instinct would be to keep the landing page simple and hide the economics behind a friendly number. That breaks down quickly in code review. A tiny typo PR and a 30-file architecture PR do not have the same cost profile. A cheap specialist model and a frontier lead do not have the same vendor bill. A review that gets blocked by Checkpoint before the expensive pass should not cost the same as a full sandbox investigation.

The margin problem we needed to stop pretending away

A $10-ish all-in plan sounds attractive until it meets real PR review usage. It can work for a few lightweight runs. It does not work once users start running meaningful reviews across active repositories, especially when those reviews use multiple models or sandbox execution. Due to credit gates and model floors, we can still avoid catastrophic loss on the worst cases, but that is not the same as building a healthy product.

If a plan is too cheap, the product learns bad habits. You start discouraging the best runs. You make the UI vague. You hide model choices. You turn off expensive routes by default. You explain away usage instead of improving it. That is exactly the opposite of what Critique should be: a review system that is candid about cost because it is serious about quality.

The new bundled tiers

Use these when you want Critique to handle the model bill and present one predictable product invoice.

| Plan | Price | Monthly credits | Best fit | |

|---|---|---|---|---|

| Solo | Solo | $19/mo | 750 | Founders, solo maintainers, and small repos that need real review without seat pricing. |

| Pro | Pro | $49/mo | 3,000 | The default for teams running Critique on meaningful PRs every week. |

| Team | Team | $149/mo | 10,000 | Higher-volume teams that need frontier models, org guardrails, and a bigger review budget. |

Free installs keep a 100-credit evaluation lane so you can test Checkpoint, chat, and a handful of efficient reviews before committing.

Solo and Pro share the standard model catalog. That means the lead reviewer and specialist sub-agents can be swapped across the same model pool. Team adds the expensive frontier lane: GPT-5.2 Pro, GPT-5.5 Pro, and Claude Opus 4.7. That distinction matters because the model is not a cosmetic setting. It is the dominant cost driver once reviews become deep.

The price points are intentionally not trying to win the cheapest-logo contest. They are trying to make good review sustainable. A plan that cannot survive users running the product properly is not generous. It is fragile.

How the credit formula works

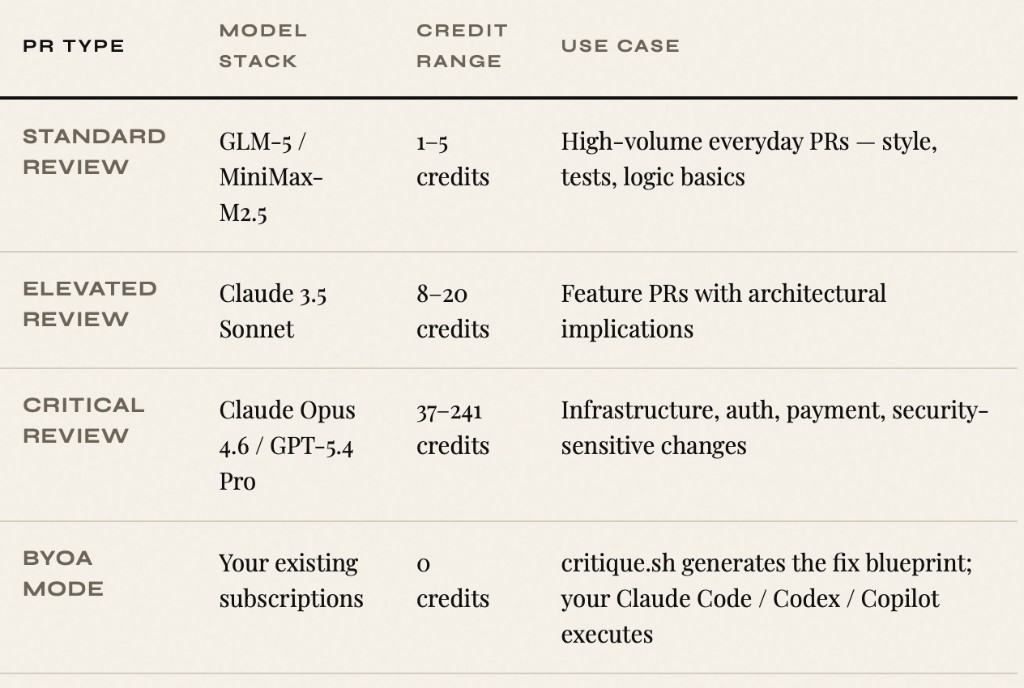

The core formula is still simple: review credits equal the lead model floor plus the specialist sub-agent floors, multiplied by PR depth. Tiny PRs should be cheap. Large PRs should be more expensive. Stronger models should cost more than efficient flash models. The value of the system is that those rules are visible before you blindly run another expensive job.

A review is not a single fixed unit. It is a bundle of model work scaled by depth.

| Input | Meaning | Why it exists | |

|---|---|---|---|

| Lead model floor | Lead model floor | The main reviewer and final synthesis pass. | Different lead models have different cost and capability profiles. |

| Specialist floors | Sum of sub-agent floors | Security, tests, architecture, performance, and code quality specialists. | You should be able to trade breadth, model strength, and cost deliberately. |

| Depth multiplier | 0.75x to 2.0x+ | Changed files, context size, reruns, and escalation depth. | A tiny PR and a sprawling refactor should not burn the same budget. |

| Low-balance gate | 25-credit default floor | PR review can stop before expensive work when the account is too low. | It is better to block cleanly than start a doomed run and surprise the user later. |

The runtime usage ledger still captures tokens and model attribution. The formula gives the user a predictable front-door model; telemetry keeps the backend auditable.

This is also why Checkpoint is not a side feature. Checkpoint is economic infrastructure. It evaluates contributor trust, PR shape, changed files, commit history, and policy overrides before the expensive review swarm starts. If it blocks a weak PR in block mode, that saves money as well as attention.

Why BYOK is separate instead of just another credit tier

BYOK solves a different problem from bundled credits. Some teams do not want Critique to resell model usage at all. They already have an OpenRouter account, they already understand which models they want, and they would rather see the raw provider bill than buy a larger Critique pool. For those teams, the honest product is not a bigger bundle. It is a cheaper harness fee.

That is why BYOK is $8/month. The $8 is not paying for model tokens. It pays for the product system around those tokens: spinning up E2B sandboxes, running OpenCode inside them, streaming chat, writing GitHub checks, loading repository context, keeping usage logs, routing webhooks, storing the OpenRouter key encrypted at rest, and making the whole workflow feel like one review product instead of a pile of scripts.

How BYOK works in the app

There is now an OpenRouter key panel in Settings. Save a key there and new Critique Chat calls prefer your key. Sandbox-native OpenCode PR review also receives your key and marks usage as externally billed. Critique still records the telemetry, call IDs, stages, and token counts where available, but the credit ledger records zero Critique credits for those BYOK model calls because OpenRouter is the billing source.

This matters operationally. BYOK cannot just be a pricing-card label. The backend has to know which key was used. The sandbox has to receive the right key. The OpenRouter proxy has to tag rows correctly. Usage events have to say externally billed instead of quietly draining the user credit pool. Otherwise BYOK is just marketing copy with a bug attached.

What we are differentiating on

Most coding products try to differentiate by saying they have a better model, a faster bot, or a nicer chat box. That shelf life is short. Model access commoditizes. Chat UIs converge. The durable differentiation is the review workflow around the model: Checkpoint before spend, multi-model routing during review, sandbox execution when static context is not enough, a usage ledger after the run, and Remedy when the user wants the system to attempt a fix.

Checkpoint is especially important because it changes when the product chooses to spend. A normal review bot starts at the diff and burns tokens immediately. Critique can first ask whether the PR deserves the full swarm. That means fraud, drive-by AI slop, giant risky changes, untrusted contributors, and policy-violating branches can be handled before the expensive work begins.

BYOK strengthens that positioning rather than weakening it. If your team wants direct provider control, we should not force you into our bundled margin. If your team wants procurement simplicity, the bundled credit tiers are still there. Either way, the product remains the same: a structured review system, not a single model wrapped in a comment bot.

Who should choose which path

- 1Choose Solo, Pro, or Team if you want one Critique invoice.Bundled credits are better when finance wants predictability, your team does not want to manage provider keys, or you want Critique to absorb the model-routing details.

- 2Choose BYOK if model usage already belongs in your OpenRouter account.BYOK is better when your review volume is high variance, you want raw model-cost visibility, or you already have OpenRouter controls, limits, and reporting in place.

- 3Use Checkpoint no matter which billing path you choose.Checkpoint is the spend-control layer. It can stop weak PRs before either Critique credits or your OpenRouter account gets hit by a full review run.

The bigger point

AI code review pricing has to stop acting like every pull request is the same. Some PRs need a cheap pass. Some need a panel of specialists. Some should be blocked before review. Some deserve sandbox execution. Some teams want one bill. Some teams want direct provider billing. The product should support those realities instead of flattening them into a number that only looks good until users behave like serious users.

That is what this update is trying to do. Bigger bundled credit pools for normal users. A 100-credit evaluation lane for new installs. A low-balance gate before expensive review work starts. A clear credit formula. A public pricing page that explains the model. And an $8 BYOK harness for teams that want Critique the system, not Critique the model reseller.

Choose the billing model that matches your review volume.

Use bundled credits if you want one invoice. Bring an OpenRouter key if you want direct model-cost ownership. Either way, Checkpoint, chat, sandbox review, and GitHub orchestration stay inside Critique.

View pricing