A fairer credit system for Critique

The review unit is now 10x larger, token-heavy PR reviews are dramatically cheaper, the pricing page reflects the new math, and every user gets +1,000 credits to test it properly.

Fairer credits.

Bigger unit. Cheaper deep reviews. +1,000 credits for everyone.

critique.sh

The old system was directionally right and operationally wrong. It gave us transparent accounting, but the standard review unit was too small for how Critique actually gets used now. As review runs became more context-heavy, more sandbox-aware, and more willing to read the surrounding repository instead of only the patch, token counts rose in exactly the scenarios where the product was doing its best work. Cheap models stayed cheap per token, but users still saw credit burn that felt harsher than it should have.

That mismatch matters. Pricing is part of product trust. If a user opens a 5M- or 10M-token review on a low-cost model and the bill feels inflated, they do not care that the ledger is internally consistent. They conclude the product is expensive in practice. That is the part we fixed.

And plainly: we should have changed this sooner. If you used Critique over the last stretch, opened a serious review, and felt the credit burn was sharper than the value you were getting back, that was a reasonable read. You were not misunderstanding the product. The system was showing a real mismatch between our accounting unit and the way the product had evolved. We are sorry for that.

What changed in the math

The old normalized unit was roughly 100k input tokens plus 15k output tokens. The new normalized unit is roughly 1M input plus 150k output. Same shape, 10x larger denominator. We did not flatten the system into a fake flat-fee product. Critique still meters real work. But we recalibrated the work unit to match the depth of modern review runs instead of an older, lighter-weight assumption.

Same model ladder. Same idea of usage-based review. A much fairer denominator for token-heavy runs.

| Metric | Old system | New system | |

|---|---|---|---|

| Standard input unit | 100k tokens | 100k tokens | 1M tokens |

| Standard output unit | 15k tokens | 15k tokens | 150k tokens |

| 5M input on a 1-credit model | ~50 credits | ~50 credits | ~5 credits |

| 10M input on a 1-credit model | ~100 credits | ~100 credits | ~10 credits |

| Frontier model floors | Still premium | Still premium | Still premium |

| Chat review credits | 0 | 0 | 0 |

The practical billing formula still multiplies model floor by usage units. We changed the review unit size, not the idea that deeper work should cost more than lighter work.

That distinction matters. A lot of pricing resets quietly hide the actual change behind a prettier number. We are not doing that. The meter still tracks real usage. Lead and specialist choices still matter. Big, expensive models still cost more than small, efficient ones. We simply resized the base review unit so the final bill matches how a modern Critique run behaves under real repository load.

What was wrong with the old system in practice

The easiest way to say it is this: the old unit was calibrated for a lighter product than the one we now ship. Earlier Critique runs were closer to a structured diff review. Current Critique runs are much closer to a repository investigation. They look at more files, synthesize more evidence, and make more use of sandbox-collected context. That is a better review. It is also a larger token footprint by design.

Under the old ratios, that larger token footprint could make efficient models feel inefficient. A low-cost model might still be cheap at the vendor layer, but once you divided the run by a too-small review unit, the credit result could still look punitive. The accounting was consistent. The user experience was wrong. Those are not the same thing.

What did not change

Cheap models are still the cheap lane. Premium models are still premium. If you choose GPT-5.5 Pro or Claude Opus 4.7 as your lead, you are deliberately choosing a more expensive run because the model itself is more expensive. That remains true. The fix here is narrower and more important: long-context review on efficient models should feel efficient on the bill too.

Why we changed it now

Because the product got better and the pricing unit did not keep up. Critique is not reading a diff in isolation anymore. Reviews increasingly pull more repo context, more adjacent files, more hidden sandbox usage, and more synthesis across specialists. That is the whole point of the product. If the product becomes more rigorous while the pricing unit stays stuck in an older world, users get penalized for using the thing the right way.

There is also a psychological reason to make the change clearly and publicly. Engineers will forgive complexity faster than they forgive unfairness. A pricing system can be detailed if it feels honest. It cannot feel arbitrary. The old ratios were starting to feel arbitrary on large runs. We would rather reset the unit now than spend months explaining away a system that users were correctly finding too sharp.

There is an operational reason too. Once a product starts processing serious review volume, every small accounting flaw becomes visible. At low volume, a pricing edge case looks like a rare annoyance. At scale, it becomes a habit-forming objection. People start routing around the product. They downgrade their review depth. They avoid certain model combinations. They second-guess whether to open a big run at all. We would rather absorb the correction cleanly than let that behavior calcify.

What we learned from this

First: pricing copy and metering logic have to move together. If the backend changes and the frontend still teaches the old mental model, users lose trust even when the raw bill improves. Second: a usage-based system has to be re-tuned when the product itself becomes more capable. Better review quality often means more context. More context means more tokens. If you keep the old denominator forever, you eventually turn product improvement into a billing penalty.

Third: when users tell you a system feels too sharp, listen before you explain. It is easy for a builder to say, technically, that the math is correct. That is often beside the point. The right question is whether the system is aligning incentives with the product behavior you want. In our case, it was not. That is why this post exists.

Frontend and pricing page cleanup

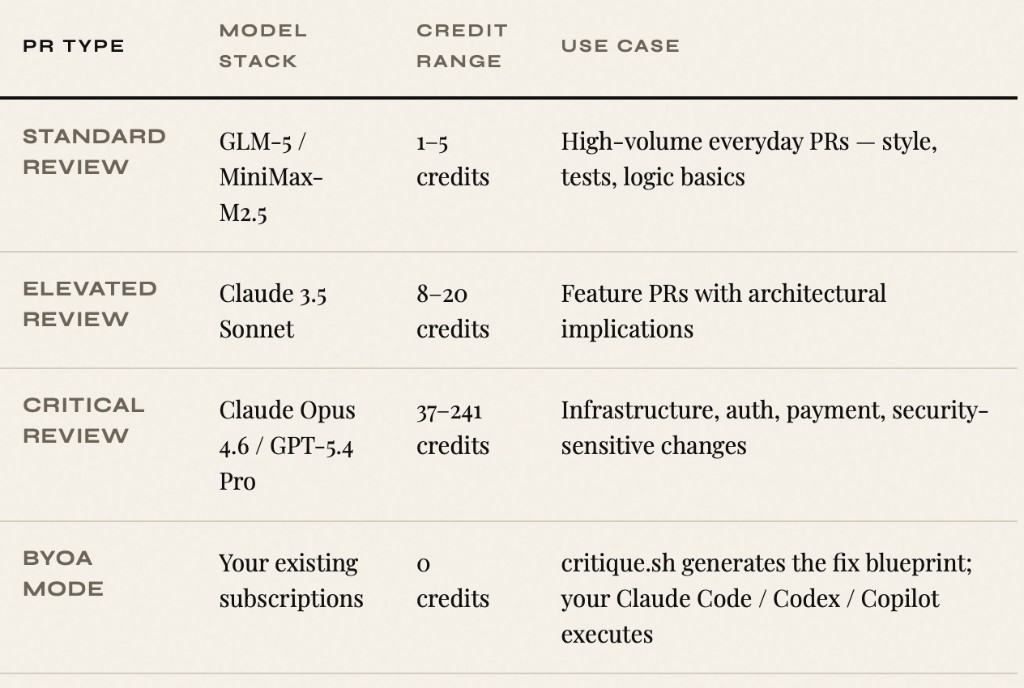

We updated the pricing page to reflect the new standard unit directly. The FAQ, model guide, and machine-readable pricing page now all describe one credit as roughly 1M input and 150k output tokens for a normal lead-model review slice. We also added a clear bonus strip so users can see, in one place, both halves of the change: cheaper deep reviews and the +1,000 credit celebration grant.

That sounds cosmetic. It is not. If the frontend still teaches the old math after the backend changes, support load rises instantly and trust falls just as fast. Pricing copy is infrastructure too.

We also wanted the page to do a better job of framing the reset emotionally, not just mathematically. This is not a hidden tweak in a changelog. It is a public correction. The pricing page now says that clearly: deep reviews got cheaper, and we are giving everyone enough extra headroom to verify that for themselves.

To celebrate: +1,000 credits for every user

The right time to ask people to trust a fairer system is when they have enough room to test it. So every user is getting +1,000 bonus credits. Not a coupon, not a lottery, not a hidden support-only adjustment. Just more room to run real reviews, compare model lanes, and feel the new economics on actual work instead of a contrived demo.

What comes next

This reset fixes the biggest visible mismatch, but it is not the end of billing work on our side. We still want tighter floor-aware gating across more surfaces, better visibility into how multi-step runs burn credits, and eventually storage that preserves fractional credit values more faithfully all the way through the ledger. The goal is not just cheaper reviews. The goal is a system that stays legible as Critique gets more ambitious.

For now, the simple version is enough: the old system had become too harsh on deep runs, we corrected it, the pricing page now reflects the new reality, and every user has another 1,000 credits to pressure-test the change. If you were one of the people who pushed us to fix this, thank you. You were right.

See the new economics live

Open the pricing page, inspect the updated credit guide, and then spend the extra 1,000 credits on real work.

Open pricing